These are my notes from week 3 of the Kubernetes CI/CD study run by Gasida of the cloudNet@ team.

Environment setup

1. Deploying the Kubernetes environment (kind)

- Deploy the cluster

# Checks before deploying the cluster

sudo su -

docker ps

mkdir ~/cicd-labs

cd ~/cicd-labs

#

kind create cluster --name myk8s --image kindest/node:v1.32.8 --config - <<EOF
kind: Cluster
apiVersion: kind.x-k8s.io/v1alpha4
networking:
  apiServerAddress: "0.0.0.0"
nodes:
- role: control-plane
  extraPortMappings:
  - containerPort: 30000
    hostPort: 30000
  - containerPort: 30001
    hostPort: 30001
  - containerPort: 30002
    hostPort: 30002
  - containerPort: 30003
    hostPort: 30003
- role: worker
EOF

# Verify

kind get nodes --name myk8s

kubens default

# Check the control-plane/worker nodes (containers): the Docker container names should be myk8s-control-plane and myk8s-worker

docker ps

docker images

# The debug output (-v6) shows the credentials being loaded from ~/.kube/config

kubectl get pod -v6

# Inspect the kubeconfig file

cat ~/.kube/config

...

server: https://0.0.0.0:40925 # << note the port

# Find the IP to use for calling the k8s API

ifconfig eth0

eth0: flags=4163<UP,BROADCAST,RUNNING,MULTICAST> mtu 1500

inet 172.27.165.105 netmask 255.255.240.0 broadcast 172.27.175.255 # << note the IP

inet6 fe80::215:5dff:fe59:9d43 prefixlen 64 scopeid 0x20<link>

ether 00:15:5d:59:9d:43 txqueuelen 1000 (Ethernet)

RX packets 9379 bytes 13903481 (13.9 MB)

RX errors 0 dropped 0 overruns 0 frame 0

TX packets 3274 bytes 238716 (238.7 KB)

TX errors 0 dropped 0 overruns 0 carrier 0 collisions 0

docker ps

## Call the IP:Port noted above

curl -k https://172.27.165.105:40925/version

{
  "major": "1",
  "minor": "32",
  "gitVersion": "v1.32.8",
  "gitCommit": "2e83bc4bf31e88b7de81d5341939d5ce2460f46f",
  "gitTreeState": "clean",
  "buildDate": "2025-08-13T14:21:22Z",
  "goVersion": "go1.23.11",
  "compiler": "gc",
  "platform": "linux/amd64"
}

- Install kube-ops-view

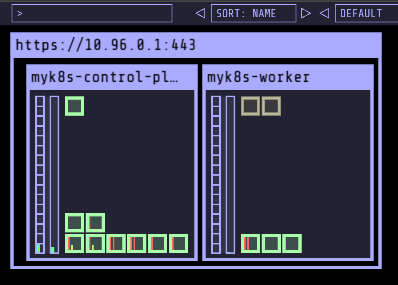

# kube-ops-view

# helm show values geek-cookbook/kube-ops-view

helm repo add geek-cookbook https://geek-cookbook.github.io/charts/

helm install kube-ops-view geek-cookbook/kube-ops-view --version 1.2.2 --set service.main.type=NodePort,service.main.ports.http.nodePort=30001 --set env.TZ="Asia/Seoul" --namespace kube-system

# Verify the installation

kubectl get deploy,pod,svc,ep -n kube-system -l app.kubernetes.io/instance=kube-ops-view

# kube-ops-view URL (1.5x and 2x scale)

open "http://<your WSL Ubuntu eth0 IP>:30001/#scale=1.5"

open "http://<your WSL Ubuntu eth0 IP>:30001/#scale=2"

# Delete the cluster

kind delete cluster --name myk8s

2. docker compose

- Start the Jenkins and Gogs containers

# Create the working directory and move into it

mkdir cicd-labs

cd cicd-labs

# Install kind first so that the kind docker network exists, then create Jenkins and Gogs below

# Check the docker networks: the kind network is used

docker network ls

...

7e8925d46acb kind bridge local

...

#

cat <<EOT > docker-compose.yaml
services:

  jenkins:
    container_name: jenkins
    image: jenkins/jenkins
    restart: unless-stopped
    networks:
      - kind
    ports:
      - "8080:8080"
      - "50000:50000"
    volumes:
      - /var/run/docker.sock:/var/run/docker.sock
      - jenkins_home:/var/jenkins_home

  gogs:
    container_name: gogs
    image: gogs/gogs
    restart: unless-stopped
    networks:
      - kind
    ports:
      - "10022:22"
      - "3000:3000"
    volumes:
      - gogs-data:/data

volumes:
  jenkins_home:
  gogs-data:

networks:
  kind:
    external: true
EOT

# Deploy

docker compose up -d

docker compose ps

docker inspect kind

# Check basic info

for i in gogs jenkins ; do echo ">> container : $i <<"; docker compose exec $i sh -c "whoami && pwd"; echo; done

# Open a shell in each container with docker compose

docker compose exec jenkins bash

exit

docker compose exec gogs bash

exit

- Initial setup of the Jenkins container

# Get the initial Jenkins admin password

docker compose exec jenkins cat /var/jenkins_home/secrets/initialAdminPassword

# Jenkins web UI: set the account / password >> admin / qwe123

# Windows: open http://<WSL2 Ubuntu eth0 IP>:8080 in a web browser

# (Note) Follow the logs to watch the plugin installation

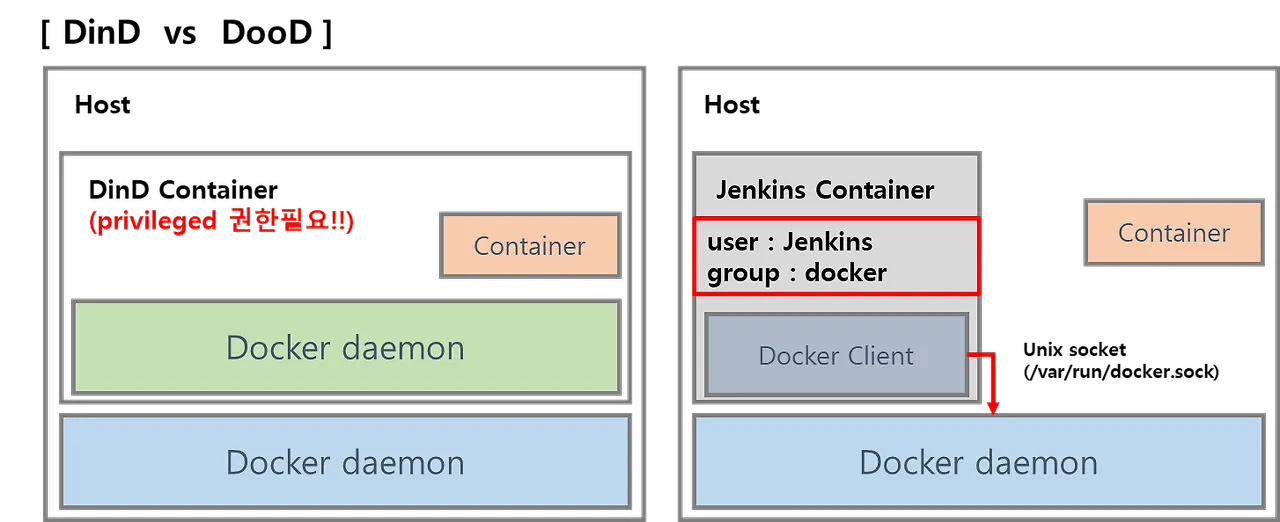

docker compose logs jenkins -f

- Let the Jenkins container use the host's Docker daemon

# Install the Docker CLI inside the Jenkins container

docker compose exec --privileged -u root jenkins bash

-----------------------------------------------------

id

install -m 0755 -d /etc/apt/keyrings # make sure the keyring directory exists

curl -fsSL https://download.docker.com/linux/debian/gpg -o /etc/apt/keyrings/docker.asc

chmod a+r /etc/apt/keyrings/docker.asc

echo \

"deb [arch=$(dpkg --print-architecture) signed-by=/etc/apt/keyrings/docker.asc] https://download.docker.com/linux/debian \

$(. /etc/os-release && echo "$VERSION_CODENAME") stable" | \

tee /etc/apt/sources.list.d/docker.list > /dev/null

apt-get update && apt install docker-ce-cli curl tree jq yq -y

docker info

docker ps

which docker

# Let the non-root jenkins user inside the container run docker as well

groupadd -g 989 -f docker # Windows WSL2 (container): use the docker group ID shown by cat /etc/group on the host

chgrp docker /var/run/docker.sock

ls -l /var/run/docker.sock

usermod -aG docker jenkins

cat /etc/group | grep docker

exit

--------------------------------------------

# Restart the Jenkins container so the Jenkins app also picks up the settings above

docker compose restart jenkins

# Verify that docker commands run as the jenkins user

docker compose exec jenkins id

docker compose exec jenkins docker info

docker compose exec jenkins docker ps

docker compose exec jenkins cat /etc/group

In the Windows environment, the non-root jenkins user must also be given permission to run docker.

# 1. Check for a docker group inside the container

cat /etc/group | grep docker

→ no output

# 2. Check the group permissions on the Docker socket

ls -l /var/run/docker.sock

srw-rw---- 1 root docker 0 Oct 31 00:28 /var/run/docker.sock

- The permissions show that only root or members of the docker group can access the socket.

- The Jenkins container has no docker group, so the jenkins user has no permission to access /var/run/docker.sock.

# 3. Check the GID of the host's Docker socket

stat -c '%g' /var/run/docker.sock

988

# 4. Create a group with the same GID inside the Jenkins container

groupadd -g 988 -f docker

cat /etc/group | grep docker

docker:x:988:

usermod -aG docker jenkins

cat /etc/group | grep docker
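The permission problem above is plain Unix mode-bit logic: a non-root user can use the socket only if its UID owns it or one of its group IDs equals the socket's GID (988 here). A minimal Python sketch of that check, assuming a Linux host; `can_use_socket` is a hypothetical helper that models only the owner/group/other bits shown by `ls -l`, not ACLs or capabilities.

```python
import os
import stat


def can_use_socket(path: str, uid: int, gids: set[int]) -> bool:
    """Return True if a process with this uid and group set may read and write path.

    Only the owner/group/other mode bits are modeled (e.g. srw-rw---- root docker);
    ACLs, capabilities and security modules are ignored. uid 0 (root) always passes.
    """
    st = os.stat(path)
    if uid == 0:
        return True
    if uid == st.st_uid:
        need = stat.S_IRUSR | stat.S_IWUSR
    elif st.st_gid in gids:
        need = stat.S_IRGRP | stat.S_IWGRP
    else:
        need = stat.S_IROTH | stat.S_IWOTH
    return (st.st_mode & need) == need


if __name__ == "__main__":
    sock = "/var/run/docker.sock"
    if os.path.exists(sock):
        my_groups = set(os.getgroups()) | {os.getgid()}
        print("socket GID:", os.stat(sock).st_gid)
        print("current user can use it:", can_use_socket(sock, os.getuid(), my_groups))
```

A running process keeps the group list it started with, which is why `usermod -aG docker jenkins` only takes effect for the Jenkins app after the container restart.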

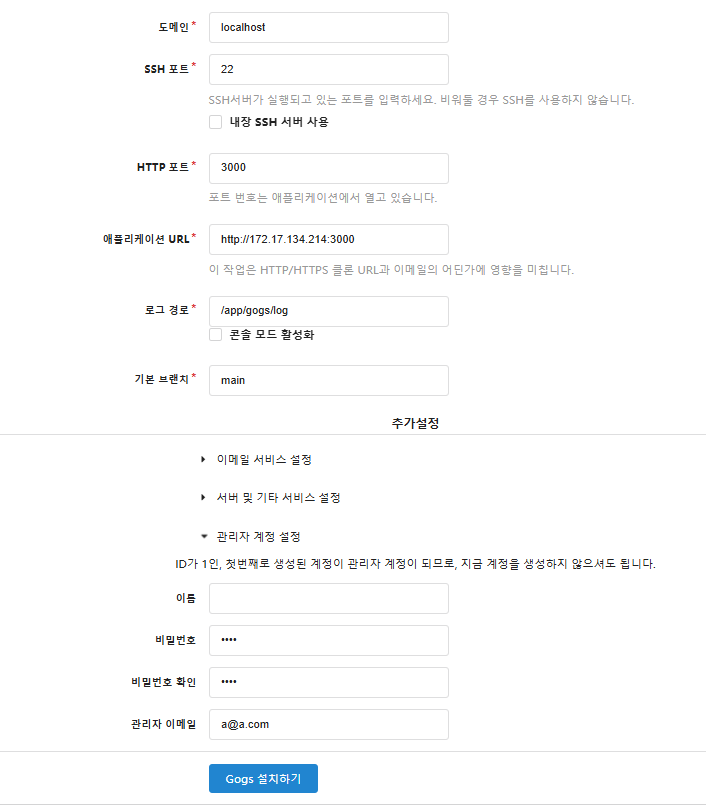

3. gogs

- Initial setup of the Gogs container

# Open the initial setup page

open "http://127.0.0.1:3000/install" # macOS

# Windows: open "http://<Ubuntu eth0 IP>:3000/install" in a browser

- Initial settings

- Database type : SQLite3

- Application URL : http://<your IP>:3000/

- Default branch : main

- Click Admin Account Settings : enter a username (devops), password (qwe123), and email

- Leave everything else at the defaults

- Click Install Gogs ⇒ log in with the admin account

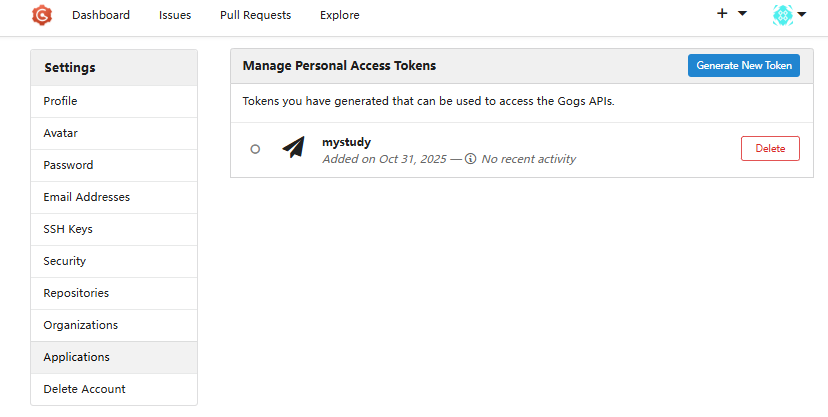

- After logging in → Your Settings → Applications : click Generate New Token, Token Name (devops) ⇒ click Generate Token and note the token down

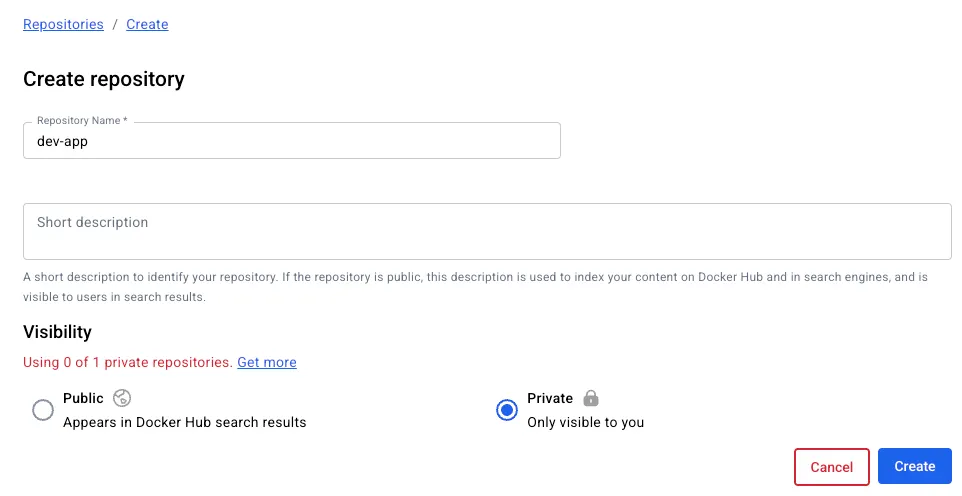

- New Repository 1 : for the development team

- Repository Name : dev-app

- Visibility : (Check) This repository is Private ← check the first Visibility option

- .gitignore : Python

- Readme : Default → (Check) initialize this repository with selected files and template

- New Repository 2 : for the DevOps team

- Repository Name : ops-deploy

- Visibility : (Check) This repository is Private

- .gitignore : Python

- Readme : Default → (Check) initialize this repository with selected files and template

- Set up the repository for the Gogs lab

docker exec -it gogs bash

----------------------------------------------

# (Optional) Clear cached Git credentials

git credential-cache exit

#

TMOUT=0

pwd

ls

cd /data # path backed by the mounted volume

#

git config --list --show-origin

#

TOKEN=<your Gogs token>

TOKEN=7aa8e88fac50bb72cbed54d212f1262342108261

MyIP=<your IP> # macOS: the PC's IP, Windows: the Ubuntu eth0 IP

MyIP=192.168.254.110

git clone <your Gogs dev-app repo URL>

git clone http://devops:$TOKEN@$MyIP:3000/devops/dev-app.git

Cloning into 'dev-app'...

...

#

cd /data/dev-app

#

git --no-pager config --local --list

git config --local user.name "devops"

git config --local user.email "a@a.com"

git config --local init.defaultBranch main

git config --local credential.helper store

git --no-pager config --local --list

cat .git/config

#

git --no-pager branch

git remote -v

# Write server.py

cat > server.py <<EOF
from http.server import ThreadingHTTPServer, BaseHTTPRequestHandler
from datetime import datetime
import socket

class RequestHandler(BaseHTTPRequestHandler):
    def do_GET(self):
        match self.path:
            case '/':
                now = datetime.now()
                hostname = socket.gethostname()
                response_string = now.strftime("The time is %-I:%M:%S %p, VERSION 0.0.1\n")
                response_string += f"Server hostname: {hostname}\n"
                self.respond_with(200, response_string)
            case '/healthz':
                self.respond_with(200, "Healthy")
            case _:
                self.respond_with(404, "Not Found")

    def respond_with(self, status_code: int, content: str) -> None:
        self.send_response(status_code)
        self.send_header('Content-type', 'text/plain')
        self.end_headers()
        self.wfile.write(bytes(content, "utf-8"))

def startServer():
    try:
        server = ThreadingHTTPServer(('', 80), RequestHandler)
        print("Listening on " + ":".join(map(str, server.server_address)))
        server.serve_forever()
    except KeyboardInterrupt:
        server.shutdown()

if __name__ == "__main__":
    startServer()
EOF

# (Note) Test-run it with python

python3 server.py

curl localhost

curl localhost/healthz

# Create the Dockerfile

cat > Dockerfile <<EOF

FROM python:3.12

ENV PYTHONUNBUFFERED=1

COPY . /app

WORKDIR /app

CMD python3 server.py

EOF

# Create the VERSION file

echo "0.0.1" > VERSION

#

tree

git status

git add .

git commit -m "Add dev-app"

git push -u origin main

...

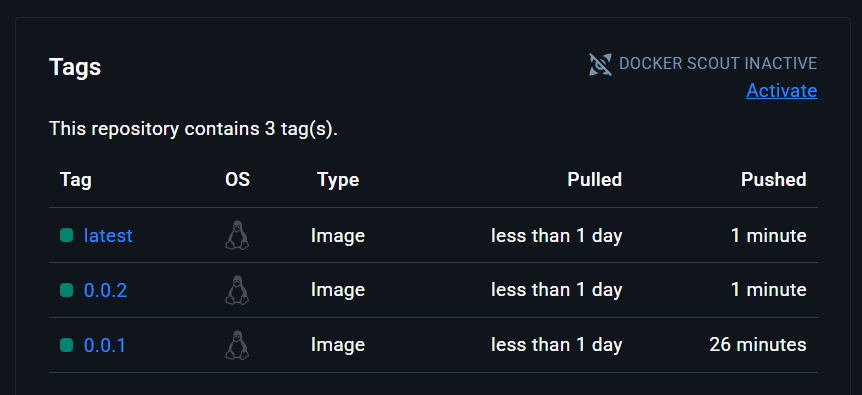

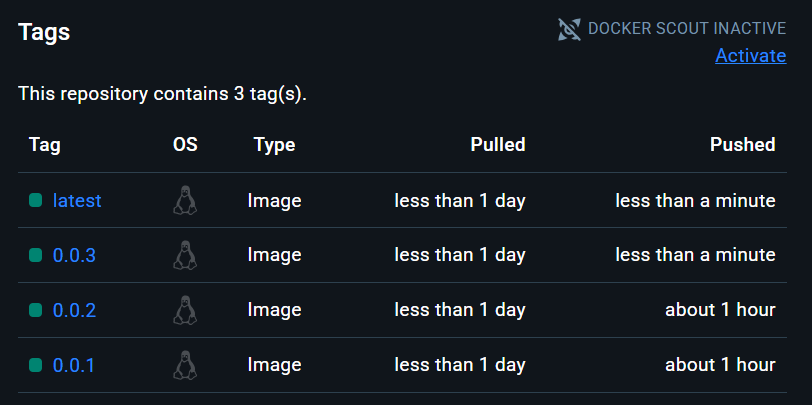

4. Docker Hub token & private repository

- I reused the token from last time.

- Create a private repository (dev-app)


Tearing down the environment

docker compose down --volumes --remove-orphans && rm -rf gogs-data jenkins_home

Jenkins CI + K8S(Kind)

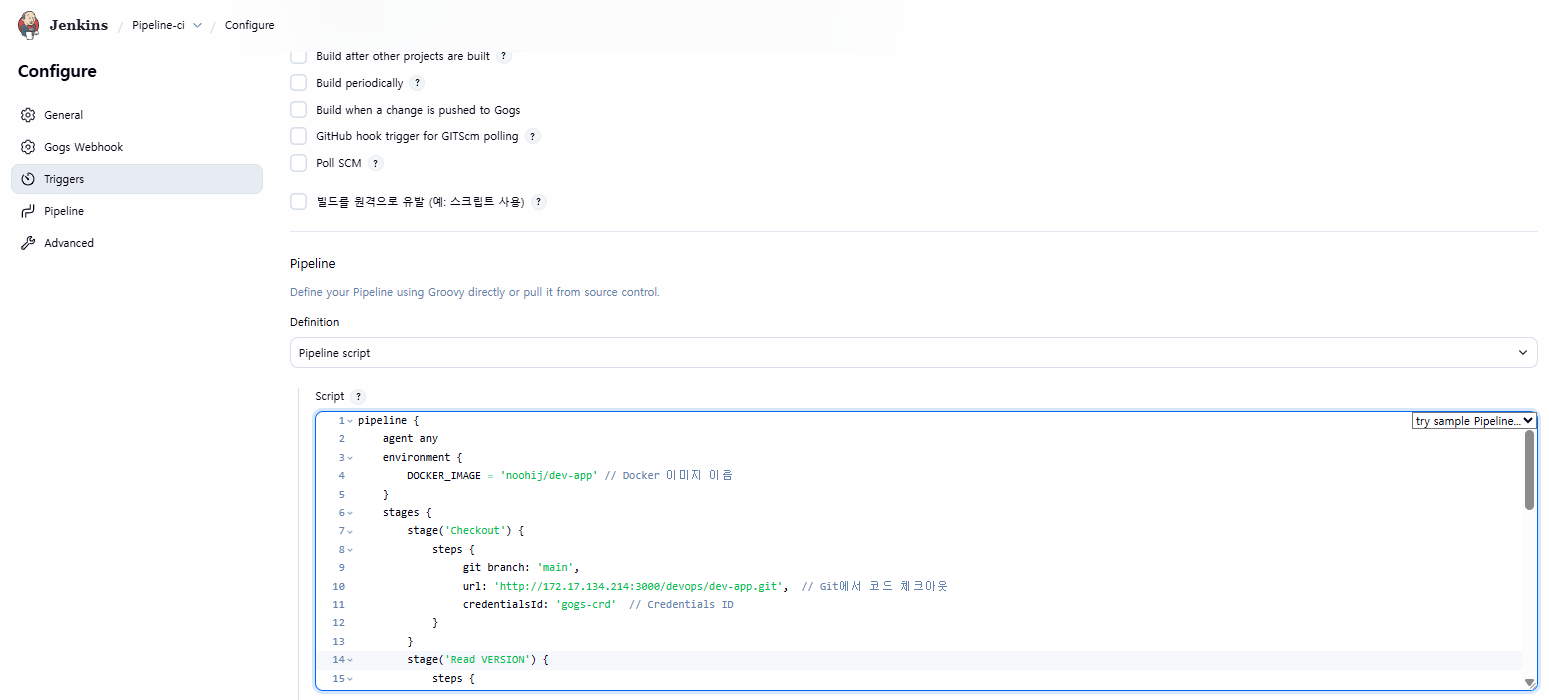

1. Jenkins CI Pipeline

- Jenkins configuration : install plugins, set up credentials

- Credentials : Manage Jenkins → Credentials → Global → Add Credentials

- Gogs repo credentials : gogs-crd

- Kind : Username with password

- Username : devops

- Password : <Gogs token>

- ID : gogs-crd

- Docker Hub credentials : dockerhub-crd

- Kind : Username with password

- Username : <Docker Hub username>

- Password : <Docker Hub password or token>

- ID : dockerhub-crd


- Create a Jenkins item (Pipeline)

- The two appImage.push calls push the version tag and the latest tag, respectively

pipeline {

agent any

environment {

DOCKER_IMAGE = '<your Docker Hub account>/dev-app' // Docker image name

}

stages {

stage('Checkout') {

steps {

git branch: 'main',

url: 'http://<your IP>:3000/devops/dev-app.git', // check out the code from Git

credentialsId: 'gogs-crd' // Credentials ID

}

}

stage('Read VERSION') {

steps {

script {

// read the VERSION file

def version = readFile('VERSION').trim()

echo "Version found: ${version}"

// expose it as an environment variable

env.DOCKER_TAG = version

}

}

}

stage('Docker Build and Push') {

steps {

script {

docker.withRegistry('https://index.docker.io/v1/', 'dockerhub-crd') {

// use DOCKER_TAG

def appImage = docker.build("${DOCKER_IMAGE}:${DOCKER_TAG}")

appImage.push()

appImage.push("latest")

}

}

}

}

}

post {

success {

echo "Docker image ${DOCKER_IMAGE}:${DOCKER_TAG} has been built and pushed successfully!"

}

failure {

echo "Pipeline failed. Please check the logs."

}

}

}
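The Read VERSION and Docker Build and Push stages reduce to one small piece of naming logic: trim the VERSION file and push the same image under the version tag and `latest`. A Python sketch of just that logic (the helper name `image_refs` is hypothetical and it does not call Docker), handy for checking what a given VERSION file will produce.

```python
from pathlib import Path


def image_refs(image: str, version_text: str) -> list[str]:
    """Mirror readFile('VERSION').trim(): return the version tag and the latest tag."""
    version = version_text.strip()
    if not version:
        raise ValueError("VERSION file is empty")
    return [f"{image}:{version}", f"{image}:latest"]


if __name__ == "__main__":
    # Run from the dev-app checkout; '<docker-hub-user>' is a placeholder.
    version_file = Path("VERSION")
    if version_file.exists():
        for ref in image_refs("<docker-hub-user>/dev-app", version_file.read_text()):
            print(ref)
```

For a VERSION file containing `0.0.1`, this yields `<user>/dev-app:0.0.1` and `<user>/dev-app:latest`, the same two references the pipeline pushes.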

- Deploying to Kubernetes

# Deploy the Deployment: 2 replicas (pods) with the container image >> change only the Docker account part below before deploying

DHUSER=<Docker Hub username>

DHUSER=gasida

cat <<EOF | kubectl apply -f -
apiVersion: apps/v1
kind: Deployment
metadata:
  name: timeserver
spec:
  replicas: 2
  selector:
    matchLabels:
      pod: timeserver-pod
  template:
    metadata:
      labels:
        pod: timeserver-pod
    spec:
      containers:
      - name: timeserver-container
        image: docker.io/$DHUSER/dev-app:0.0.1
        livenessProbe:
          initialDelaySeconds: 30
          periodSeconds: 30
          httpGet:
            path: /healthz
            port: 80
            scheme: HTTP
          timeoutSeconds: 5
          failureThreshold: 3
          successThreshold: 1
EOF

watch -d kubectl get deploy,rs,pod -o wide

# Check the rollout status (also visible in the kube-ops-view web UI)

kubectl get events -w --sort-by '.lastTimestamp'

kubectl get deploy,pod -o wide

kubectl describe pod

...

Events:

Type Reason Age From Message

---- ------ ---- ---- -------

Normal Scheduled 53s default-scheduler Successfully assigned default/timeserver-7cf7db8f6c-mtvn7 to myk8s-worker

Normal BackOff 19s (x2 over 50s) kubelet Back-off pulling image "docker.io/gasida/dev-app:latest"

Warning Failed 19s (x2 over 50s) kubelet Error: ImagePullBackOff

Normal Pulling 4s (x3 over 53s) kubelet Pulling image "docker.io/gasida/dev-app:latest"

Warning Failed 3s (x3 over 51s) kubelet Failed to pull image "docker.io/gasida/dev-app:latest": failed to pull and unpack image "docker.io/gasida/dev-app:latest": failed to resolve reference "docker.io/gasida/dev-app:latest": pull access denied, repository does not exist or may require authorization: server message: insufficient_scope: authorization failed

Warning Failed 3s (x3 over 51s) kubelet Error: ErrImagePull

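TROUBLESHOOTING : image pull error (ErrImagePull / ImagePullBackOff)

- Occurs when the container image name or tag is mistyped

- or when the image does not exist in the registry, or the credentials needed to pull it are missing

- This time the cause was missing credentials (dev-app is a private repository) → register them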

# k8s secret: register the Docker credentials

kubectl get secret -A # the secret type is specified at creation time

DHUSER=<Docker Hub username>

DHPASS=<Docker Hub password or token>

echo $DHUSER $DHPASS

DHUSER=gasida

DHPASS=dckr_pat_JLKruUO5Ee8BGWhqxgRz50_jmT0

echo $DHUSER $DHPASS

kubectl create secret docker-registry dockerhub-secret \

--docker-server=https://index.docker.io/v1/ \

--docker-username=$DHUSER \

--docker-password=$DHPASS

# Verify: decode the base64-encoded data

kubectl get secret

kubectl get secret dockerhub-secret -o jsonpath='{.data.\.dockerconfigjson}' | base64 -d | jq
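The decoded output is a `.dockerconfigjson` document: an `auths` map keyed by registry URL, where `auth` is base64 of `username:password`, and the Secret stores that whole JSON base64-encoded once more. A Python sketch that builds the same shape, assuming the fields `kubectl create secret docker-registry` emits when no `--docker-email` is given (username, password, auth); the helper names are hypothetical.

```python
import base64
import json


def docker_config_json(server: str, username: str, password: str) -> str:
    """Build a .dockerconfigjson payload in the 'auths' layout."""
    auth = base64.b64encode(f"{username}:{password}".encode()).decode()
    return json.dumps({"auths": {server: {"username": username,
                                          "password": password,
                                          "auth": auth}}})


def secret_data_value(config_json: str) -> str:
    """What ends up under data['.dockerconfigjson'] in the Secret: base64 of the JSON."""
    return base64.b64encode(config_json.encode()).decode()


if __name__ == "__main__":
    # Dummy credentials for illustration only.
    cfg = docker_config_json("https://index.docker.io/v1/", "user", "pass")
    print(json.dumps(json.loads(cfg), indent=2))
```

With the dummy `user`/`pass`, the `auth` field comes out as `dXNlcjpwYXNz`, which is why anyone who can read the Secret can recover the token: base64 is an encoding, not encryption.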

# Update the Deployment to use the secret >> change only the Docker account part below before deploying

cat <<EOF | kubectl apply -f -
apiVersion: apps/v1
kind: Deployment
metadata:
  name: timeserver
spec:
  replicas: 2
  selector:
    matchLabels:
      pod: timeserver-pod
  template:
    metadata:
      labels:
        pod: timeserver-pod
    spec:
      containers:
      - name: timeserver-container
        image: docker.io/$DHUSER/dev-app:0.0.1
        livenessProbe:
          initialDelaySeconds: 30
          periodSeconds: 30
          httpGet:
            path: /healthz
            port: 80
            scheme: HTTP
          timeoutSeconds: 5
          failureThreshold: 3
          successThreshold: 1
      imagePullSecrets:
      - name: dockerhub-secret
EOF

watch -d kubectl get deploy,rs,pod -o wide

# Verify

kubectl get events -w --sort-by '.lastTimestamp'

kubectl get deploy,pod

- Deploying the Service

# Create the Service

cat <<EOF | kubectl apply -f -
apiVersion: v1
kind: Service
metadata:
  name: timeserver
spec:
  selector:
    pod: timeserver-pod
  ports:
  - port: 80
    targetPort: 80
    protocol: TCP
    nodePort: 30000
  type: NodePort
EOF

#

kubectl get service,ep timeserver -owide

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE SELECTOR

service/timeserver NodePort 10.96.236.37 <none> 80:30000/TCP 25s pod=timeserver-pod

NAME ENDPOINTS AGE

endpoints/timeserver 10.244.1.2:80,10.244.2.2:80,10.244.3.2:80 25s

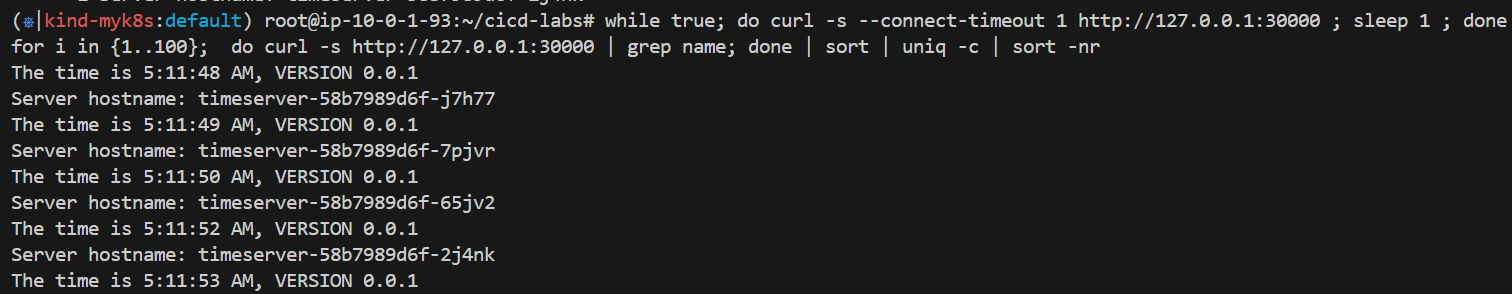

# Access through the Service (NodePort): "node IP:NodePort"

curl http://127.0.0.1:30000

curl http://127.0.0.1:30000

curl http://127.0.0.1:30000/healthz

# Keep sending requests to check load balancing

while true; do curl -s --connect-timeout 1 http://127.0.0.1:30000 ; sleep 1 ; done

for i in {1..100}; do curl -s http://127.0.0.1:30000 | grep name; done | sort | uniq -c | sort -nr

# Scale out the pods: new replicas are added to the Service endpoints automatically

kubectl scale deployment timeserver --replicas 4

kubectl get service,ep timeserver -owide

# Keep sending requests to check load balancing

while true; do curl -s --connect-timeout 1 http://127.0.0.1:30000 ; sleep 1 ; done

for i in {1..100}; do curl -s http://127.0.0.1:30000 | grep name; done | sort | uniq -c | sort -nr
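The `grep name | sort | uniq -c | sort -nr` pipeline simply counts how many of the 100 responses each pod served. The same tally in Python, assuming each response body contains the `Server hostname: <pod>` line that server.py prints; the URL and request count mirror the curl loop, and the helper names are hypothetical.

```python
from collections import Counter
from typing import Iterable, Optional
from urllib.request import urlopen


def hostname_of(body: str) -> Optional[str]:
    """Extract the pod name from server.py's 'Server hostname: ...' line."""
    for line in body.splitlines():
        if line.startswith("Server hostname:"):
            return line.split(":", 1)[1].strip()
    return None


def tally(bodies: Iterable[str]) -> list[tuple[str, int]]:
    """Roughly `grep name | sort | uniq -c | sort -nr`: (hostname, count), most frequent first."""
    counts = Counter(h for h in map(hostname_of, bodies) if h)
    return counts.most_common()


def fetch_bodies(url: str, n: int) -> list[str]:
    """Send n GET requests, silently skipping failures like `curl -s`."""
    bodies = []
    for _ in range(n):
        try:
            with urlopen(url, timeout=1) as resp:
                bodies.append(resp.read().decode())
        except OSError:
            pass
    return bodies


if __name__ == "__main__":
    for host, count in tally(fetch_bodies("http://127.0.0.1:30000", 100)):
        print(f"{count:7d} {host}")
```

After `kubectl scale ... --replicas 4`, the list should grow to four hostnames once the new pods are Ready; the split between them is not guaranteed to be even.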

- k8s Deploying an application with Jenkins

- Change the sample app's server.py → Jenkins (Build Now): build a container image tagged with the new version 0.0.2 → push it to the container registry ⇒ roll out the update to the k8s Deployment

# Change VERSION: 0.0.2

# Change server.py: 0.0.2

echo "0.0.2" > VERSION

git add . && git commit -m "VERSION $(cat VERSION) Changed" && git push -u origin main
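VERSION and the version string inside server.py have to be changed together by hand. A hypothetical helper that bumps the patch number in both files, assuming VERSION always holds `MAJOR.MINOR.PATCH` integers and server.py contains the literal text `VERSION x.y.z`, as in this lab.

```python
from pathlib import Path


def bump_patch(version: str) -> str:
    """'0.0.1' -> '0.0.2'; assumes three dot-separated integers."""
    major, minor, patch = (int(part) for part in version.strip().split("."))
    return f"{major}.{minor}.{patch + 1}"


def bump_files(repo: Path) -> str:
    """Rewrite VERSION and the 'VERSION x.y.z' text in server.py; return the new version."""
    old = (repo / "VERSION").read_text().strip()
    new = bump_patch(old)
    (repo / "VERSION").write_text(new + "\n")  # same content as: echo "x.y.z" > VERSION
    server = repo / "server.py"
    server.write_text(server.read_text().replace(f"VERSION {old}", f"VERSION {new}"))
    return new
```

Calling `bump_files(Path("/data/dev-app"))` before the commit line above would make the same 0.0.1 → 0.0.2 change in one step.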

- Use the version information in the image tag

# Keep sending requests to check load balancing

while true; do curl -s --connect-timeout 1 http://127.0.0.1:30000 ; sleep 1 ; done

for i in {1..100}; do curl -s http://127.0.0.1:30000 | grep name; done | sort | uniq -c | sort -nr

#

kubectl set image deployment timeserver timeserver-container=$DHUSER/dev-app:0.0.2 && watch -d "kubectl get deploy,ep timeserver -owide; echo; kubectl get rs,pod"

# Watch the rolling update

watch -d kubectl get deploy,rs,pod,svc,ep -owide

kubectl get deploy,rs,pod,svc,ep -owide

# kubectl get deploy $DEPLOYMENT_NAME

kubectl get deploy timeserver

kubectl get pods -l pod=timeserver-pod

#

curl http://127.0.0.1:30000

- Configure Gogs webhooks

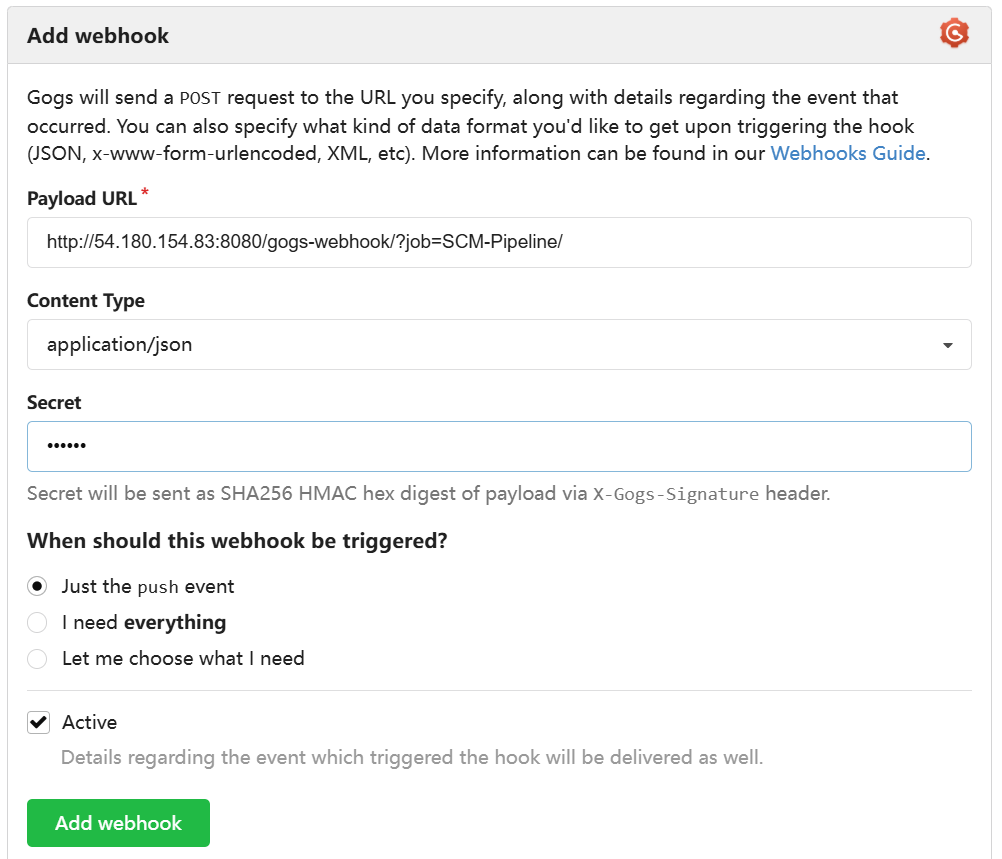

- Edit /data/gogs/conf/app.ini in the gogs container, then restart the container

- Configure a Gogs webhook to trigger the Jenkins job : [dev-app] → Settings → Webhooks → click Gogs

- Payload URL : http://192.168.254.110:8080/gogs-webhook/?job=SCM-Pipeline/ # use your own IP

- Content Type : application/json

- Secret : qwe123

- When should this webhook be triggered? : Just the push event

- Active : Check ⇒ Add webhook → Test Delivery fails with a 404 for now, because the Jenkins job is not set up yet

[security]

INSTALL_LOCK = true

SECRET_KEY = j2xaUPQcbAEwpIu

LOCAL_NETWORK_ALLOWLIST = 192.168.254.110 # use your own IP

# Restart

docker compose restart gogs

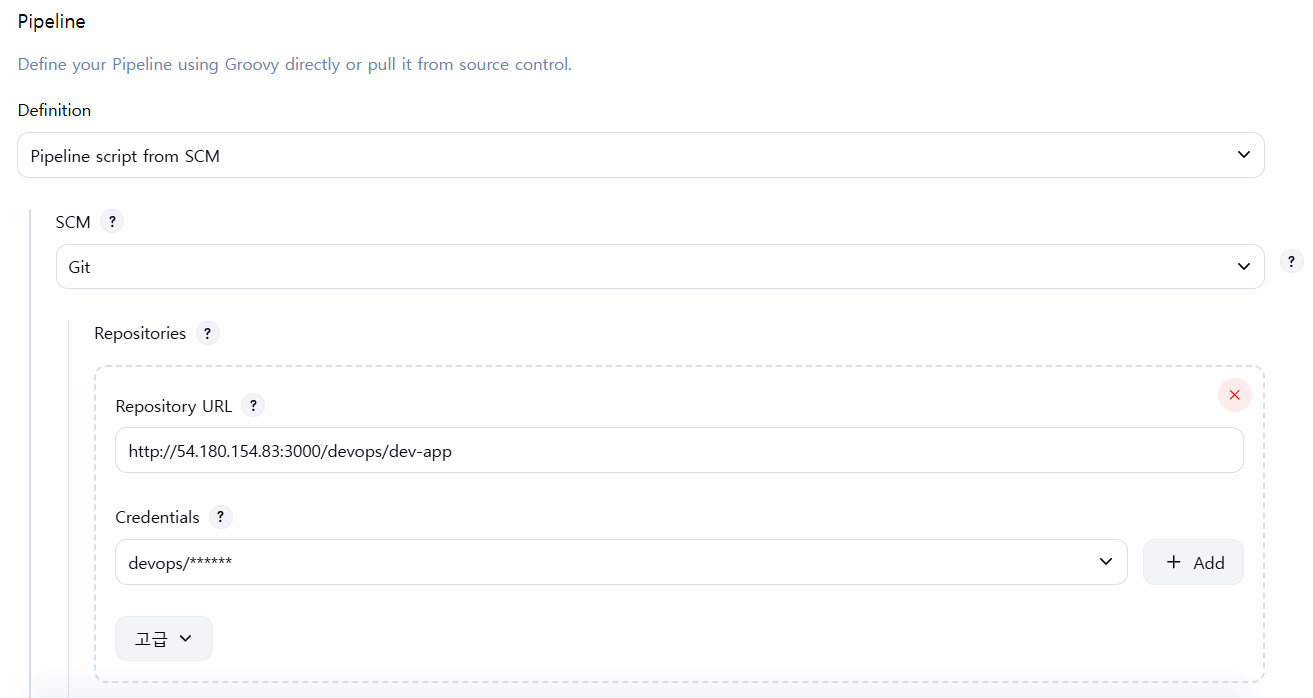
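The Secret (qwe123) entered in the webhook form has to match Jenkins' "Use Gogs secret" value because Gogs signs each delivery with it. To my understanding, Gogs sends the hex HMAC-SHA256 of the raw request body, keyed with the secret, in an `X-Gogs-Signature` header; treat that header name as an assumption to check against your Gogs version. A Python sketch of the receiving side's check (helper names are hypothetical):

```python
import hashlib
import hmac


def gogs_signature(secret: str, body: bytes) -> str:
    """Hex HMAC-SHA256 of the raw request body, keyed with the webhook secret."""
    return hmac.new(secret.encode(), body, hashlib.sha256).hexdigest()


def verify_delivery(secret: str, body: bytes, signature_header: str) -> bool:
    """Compare against the X-Gogs-Signature value (assumed header) in constant time."""
    return hmac.compare_digest(gogs_signature(secret, body), signature_header)


if __name__ == "__main__":
    # Made-up payload; a real push event carries much more JSON.
    body = b'{"ref": "refs/heads/main"}'
    sig = gogs_signature("qwe123", body)
    print(sig, verify_delivery("qwe123", body, sig))
```

A secret that differs on either side makes this check fail, so it is one thing to look at if pushes stop triggering SCM-Pipeline.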

- Create a Jenkins item (Pipeline) : item name (SCM-Pipeline)


- GitHub project : http://<your IP>:3000/<Gogs username>/dev-app ← without the .git suffix

- e.g. GitHub project : http://54.180.154.83:3000/devops/dev-app

- Use Gogs secret : qwe123

- Build Triggers : check Build when a change is pushed to Gogs

- Pipeline script from SCM

- SCM : Git

- Repository URL : http://<your IP>:3000/<Gogs username>/dev-app

- Credentials(devops/***)

- Branch(*/main)

- Script Path : Jenkinsfile


- Write the Jenkinsfile and git push

- Jenkinsfile and source code changes

# Create an empty Jenkinsfile

tree

├── Dockerfile

├── README.md

├── VERSION

└── server.py

touch Jenkinsfile

# VERSION file: change to 0.0.3

# server.py: change the version string to 0.0.3

# Jenkinsfile contents:

pipeline {

agent any

environment {

DOCKER_IMAGE = '<your Docker Hub account>/dev-app' // Docker image name

}

stages {

stage('Checkout') {

steps {

git branch: 'main',

url: 'http://<your IP>:3000/devops/dev-app.git', // check out the code from Git

credentialsId: 'gogs-crd' // Credentials ID

}

}

stage('Read VERSION') {

steps {

script {

// read the VERSION file

def version = readFile('VERSION').trim()

echo "Version found: ${version}"

// expose it as an environment variable

env.DOCKER_TAG = version

}

}

}

stage('Docker Build and Push') {

steps {

script {

docker.withRegistry('https://index.docker.io/v1/', 'dockerhub-crd') {

// use DOCKER_TAG

def appImage = docker.build("${DOCKER_IMAGE}:${DOCKER_TAG}")

appImage.push()

appImage.push("latest")

}

}

}

}

}

post {

success {

echo "Docker image ${DOCKER_IMAGE}:${DOCKER_TAG} has been built and pushed successfully!"

}

failure {

echo "Pipeline failed. Please check the logs."

}

}

}

# Push the changes

git add . && git commit -m "VERSION $(cat VERSION) Changed" && git push -u origin main

- Roll out the new version

# Apply the new version

kubectl set image deployment timeserver timeserver-container=$DHUSER/dev-app:0.0.3 && while true; do curl -s --connect-timeout 1 http://127.0.0.1:30000 ; sleep 1 ; done

# Verify

watch -d "kubectl get deploy,ep timeserver; echo; kubectl get rs,pod"

Jenkins CD + K8S

- Install tools inside the Jenkins container : kubectl (v1.32), helm

# Install kubectl, helm

docker compose exec --privileged -u root jenkins bash

--------------------------------------------

# kubectl download: run only the line that matches your platform

#curl -LO "https://dl.k8s.io/release/$(curl -L -s https://dl.k8s.io/release/stable.txt)/bin/linux/arm64/kubectl" # macOS (arm64)

#curl -LO "https://dl.k8s.io/release/$(curl -L -s https://dl.k8s.io/release/stable.txt)/bin/linux/amd64/kubectl" # Windows (amd64)

curl -LO "https://dl.k8s.io/release/v1.32.8/bin/linux/arm64/kubectl" # macOS (arm64)

curl -LO "https://dl.k8s.io/release/v1.32.8/bin/linux/amd64/kubectl" # Windows (amd64)

install -o root -g root -m 0755 kubectl /usr/local/bin/kubectl

kubectl version --client=true

#

curl https://raw.githubusercontent.com/helm/helm/main/scripts/get-helm-3 | bash

helm version

exit

--------------------------------------------

docker compose exec jenkins kubectl version --client=true

docker compose exec jenkins helm version

- Jenkins configuration : credentials (k8s-crd)

- Credentials : Manage Jenkins → Credentials → Global → Add Credentials

1. Find the IP of the myk8s-control-plane container

# Find the IP of the myk8s-control-plane container

docker inspect myk8s-control-plane | grep IPAddress

"IPAddress": "192.168.97.3",

# Call the myk8s-control-plane API from the Jenkins container

docker exec -it jenkins curl https://192.168.97.3:6443/version -k

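...

2. k8s (kind) credentials : k8s-crd

- Kind : Secret file

- File : <upload the kubeconfig file>

- ID : k8s-crd

- Write the file by hand in a text editor (e.g. Notepad) and upload it: copy ~/.kube/config and change the server address to the control-plane container IP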

# Copy the *-data values from the kind-myk8s entries in your own ~/.kube/config
apiVersion: v1
clusters:
- cluster:
    certificate-authority-data: <certificate-authority-data from ~/.kube/config>
    server: https://<myk8s-control-plane container IP>:6443 # adjust to your environment
  name: kind-myk8s
contexts:
- context:
    cluster: kind-myk8s
    user: kind-myk8s
  name: kind-myk8s
current-context: kind-myk8s
kind: Config
users:
- name: kind-myk8s
  user:
    client-certificate-data: <client-certificate-data from ~/.kube/config>
    client-key-data: <client-key-data from ~/.kube/config>

- Create a Jenkins item (Pipeline) : item name (k8s-cmd)

- After creating it, run a build and check the result

pipeline {

agent any

environment {

KUBECONFIG = credentials('k8s-crd')

}

stages {

stage('List Pods') {

steps {

sh '''

# Fetch and display Pods

kubectl get pods -A --kubeconfig "$KUBECONFIG"

'''

}

}

}

}

- Preparing a blue-green deployment with Jenkins

- Delete the Deployment and Service used earlier

kubectl delete deploy,svc timeserver

- Write the Deployment / Service YAML files

#

cd dev-app

#

mkdir deploy

#

cat > deploy/echo-server-blue.yaml <<EOF
apiVersion: apps/v1
kind: Deployment
metadata:
  name: echo-server-blue
spec:
  replicas: 2
  selector:
    matchLabels:
      app: echo-server
      version: blue
  template:
    metadata:
      labels:
        app: echo-server
        version: blue
    spec:
      containers:
      - name: echo-server
        image: hashicorp/http-echo
        args:
        - "-text=Hello from Blue"
        ports:
        - containerPort: 5678
EOF

cat > deploy/echo-server-service.yaml <<EOF
apiVersion: v1
kind: Service
metadata:
  name: echo-server-service
spec:
  selector:
    app: echo-server
    version: blue
  ports:
  - protocol: TCP
    port: 80
    targetPort: 5678
    nodePort: 30000
  type: NodePort
EOF

cat > deploy/echo-server-green.yaml <<EOF
apiVersion: apps/v1
kind: Deployment
metadata:
  name: echo-server-green
spec:
  replicas: 2
  selector:
    matchLabels:
      app: echo-server
      version: green
  template:
    metadata:
      labels:
        app: echo-server
        version: green
    spec:
      containers:
      - name: echo-server
        image: hashicorp/http-echo
        args:
        - "-text=Hello from Green"
        ports:
        - containerPort: 5678
EOF

#

tree

git add . && git commit -m "Add echo server yaml" && git push -u origin main

- Running the blue-green switch manually

#

cd deploy

kubectl delete deploy,svc --all

kubectl apply -f .

#

kubectl get deploy,svc,ep -owide

curl -s http://127.0.0.1:30000

#

kubectl patch svc echo-server-service -p '{"spec": {"selector": {"version": "green"}}}'

kubectl get deploy,svc,ep -owide

curl -s http://127.0.0.1:30000

#

kubectl patch svc echo-server-service -p '{"spec": {"selector": {"version": "blue"}}}'

kubectl get deploy,svc,ep -owide

curl -s http://127.0.0.1:30000

# Clean up

kubectl delete -f .

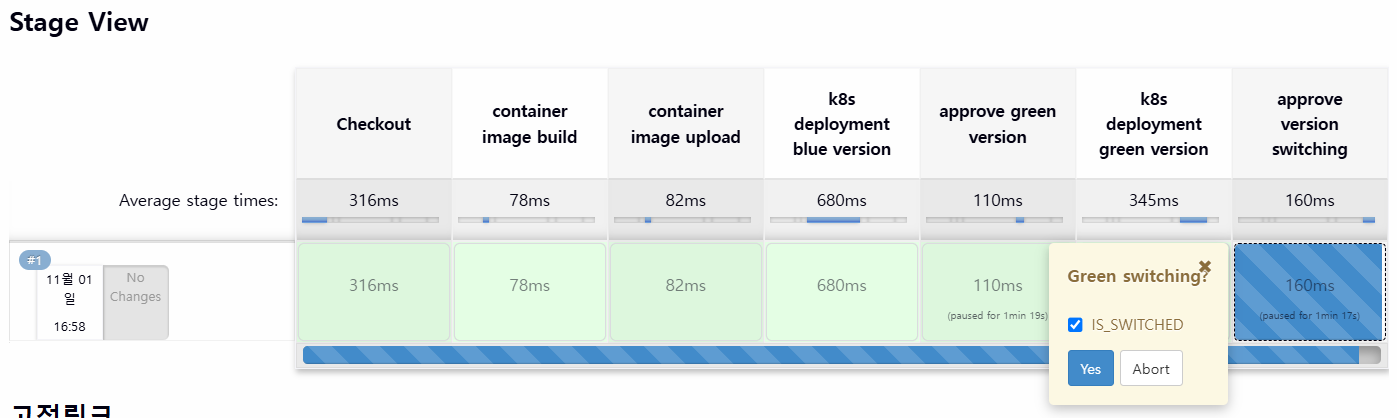

cd ..

- Create a Jenkins item (Pipeline) : item name (k8s-bluegreen), a basic k8s deployment through Jenkins

- Start the request loop beforehand

while true; do curl -s --connect-timeout 1 http://127.0.0.1:30000 ; echo ; sleep 1 ; kubectl get deploy -owide ; echo ; kubectl get svc,ep echo-server-service -owide ; echo "------------" ; done

or

while true; do curl -s --connect-timeout 1 http://127.0.0.1:30000 ; date ; echo "------------" ; sleep 1 ; done

- Pipeline script

pipeline {

agent any

environment {

KUBECONFIG = credentials('k8s-crd')

}

stages {

stage('Checkout') {

steps {

git branch: 'main',

url: 'http://<your IP>:3000/devops/dev-app.git', // check out the code from Git

credentialsId: 'gogs-crd' // Credentials ID

}

}

stage('container image build') {

steps {

echo "container image build"

}

}

stage('container image upload') {

steps {

echo "container image upload"

}

}

stage('k8s deployment blue version') {

steps {

sh "kubectl apply -f ./deploy/echo-server-blue.yaml --kubeconfig $KUBECONFIG"

sh "kubectl apply -f ./deploy/echo-server-service.yaml --kubeconfig $KUBECONFIG"

}

}

stage('approve green version') {

steps {

input message: 'approve green version', ok: "Yes"

}

}

stage('k8s deployment green version') {

steps {

sh "kubectl apply -f ./deploy/echo-server-green.yaml --kubeconfig $KUBECONFIG"

}

}

stage('approve version switching') {

steps {

script {

def returnValue = input message: 'Green switching?', ok: "Yes", parameters: [booleanParam(defaultValue: true, name: 'IS_SWITCHED')]

if (returnValue) {

sh "kubectl patch svc echo-server-service -p '{\"spec\": {\"selector\": {\"version\": \"green\"}}}' --kubeconfig $KUBECONFIG"

}

}

}

}

stage('Blue Rollback') {

steps {

script {

def returnValue = input message: 'Blue Rollback?', parameters: [choice(choices: ['done', 'rollback'], name: 'IS_ROLLBACK')]

if (returnValue == "done") {

sh "kubectl delete -f ./deploy/echo-server-blue.yaml --kubeconfig $KUBECONFIG"

}

if (returnValue == "rollback") {

sh "kubectl patch svc echo-server-service -p '{\"spec\": {\"selector\": {\"version\": \"blue\"}}}' --kubeconfig $KUBECONFIG"

}

}

}

}

}

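}

- Checking the behavior after deployment

- The script contains approval steps, so the pipeline only moves on after you make a choice

- Clicking Yes at the Green switching prompt switches the Service to green automatically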
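Both the manual `kubectl patch` and the pipeline's switch work the same way: the Service sends traffic to whichever pods carry every label in its selector, so changing `version: blue` to `version: green` moves all traffic at once. A toy Python model of that matching (the pod names and the `select` helper are illustrative only, not Kubernetes API calls):

```python
def select(pods: dict[str, dict[str, str]], selector: dict[str, str]) -> list[str]:
    """Return the names of pods whose labels contain every key/value of the selector."""
    return sorted(name for name, labels in pods.items()
                  if all(labels.get(key) == value for key, value in selector.items()))


pods = {
    "echo-server-blue-1": {"app": "echo-server", "version": "blue"},
    "echo-server-blue-2": {"app": "echo-server", "version": "blue"},
    "echo-server-green-1": {"app": "echo-server", "version": "green"},
    "echo-server-green-2": {"app": "echo-server", "version": "green"},
}

selector = {"app": "echo-server", "version": "blue"}
print(select(pods, selector))  # the two blue pods

# kubectl patch merges the patch into the existing selector map, like this:
selector = {**selector, "version": "green"}
print(select(pods, selector))  # the two green pods
```

Rolling back is the same merge with `version: blue`, which is why the Blue Rollback stage needs only one `kubectl patch`; it only works while the blue pods still exist, so choosing `done` (deleting blue) is what makes the switch final.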

- Clean up after the lab

kubectl delete deploy echo-server-blue echo-server-green ; kubectl delete svc echo-server-service